Artificial Intelligence and Cyber Frauds in India

Legal Challenges and Regulatory Response

Introduction

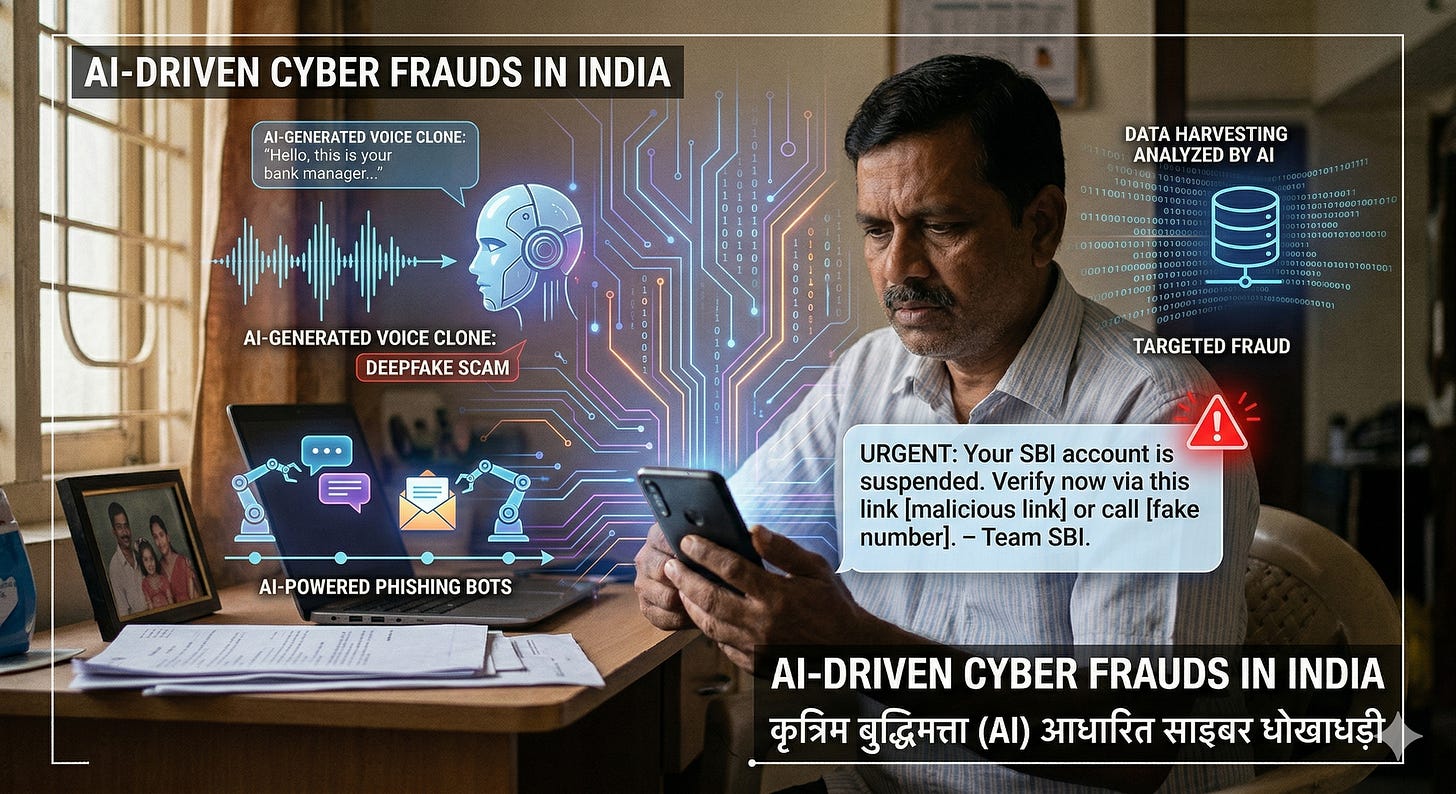

The rapid proliferation of Artificial Intelligence (AI) has transformed India’s digital ecosystem, enhancing financial inclusion, governance, and e-commerce. However, the same technologies have also amplified the scale, sophistication, and anonymity of cyber frauds. AI-driven tools such as deepfakes, automated phishing bots, and synthetic identities are redefining the cybercrime landscape.

India, with one of the largest digital user bases globally, is particularly vulnerable. The convergence of AI and cybercrime necessitates a re-evaluation of legal frameworks, enforcement mechanisms, and regulatory policies.

India’s digital transformation over the past decade is nothing short of revolutionary. From the ubiquitous adoption of UPI at roadside stalls to the digitization of government services, the internet has become deeply integrated into the daily lives of hundreds of millions of citizens. However, this hyper-connectivity has also created a vast, data-rich attack surface for cybercriminals.

Today, we are facing a new evolution in this threat landscape: the weaponization of Artificial Intelligence. It is completely understandable to feel overwhelmed or anxious when technology advances so quickly that seeing and hearing are no longer believing. But while the threats are highly sophisticated, understanding how they work and how Indian law is evolving to combat them is our strongest defence.

Nature of AI-Driven Cyber Frauds in India

AI has enabled cybercriminals to industrialize fraud through automation and personalization.

(a) Deepfake and Synthetic Media Fraud

AI-generated videos, audio, and images are increasingly used to impersonate individuals (e.g., CEOs, police officers). India has formally recognized such content as “Synthetically Generated Information (SGI)” in recent legal reforms.

Used in scams involving fake video calls or voice cloning

Facilitates identity theft and financial manipulation

(b) Digital Arrest Scams

A growing phenomenon in India involves criminals impersonating law enforcement officials.

Victims are coerced into transferring money under threat of arrest

Conducted via video calls and fake legal documents

This form of fraud is increasingly prevalent and psychologically manipulative.

(c) AI-Powered Phishing and Social Engineering

AI tools enable:

Hyper-personalized phishing emails/messages

Chatbot-based fraud interactions

Automated scam campaigns at scale

(d) Investment and E-commerce Fraud

AI is used to:

Create fake trading platforms

Generate realistic fake reviews and interfaces

Simulate legitimate financial ecosystems

A recent case in India involved a victim losing ₹3.6 crore through an AI-assisted online investment scam.

(e) Mule Accounts and Automated Fraud Networks

AI assists in:

Identifying vulnerable users

Managing large networks of fraudulent bank accounts

Automating money laundering chains

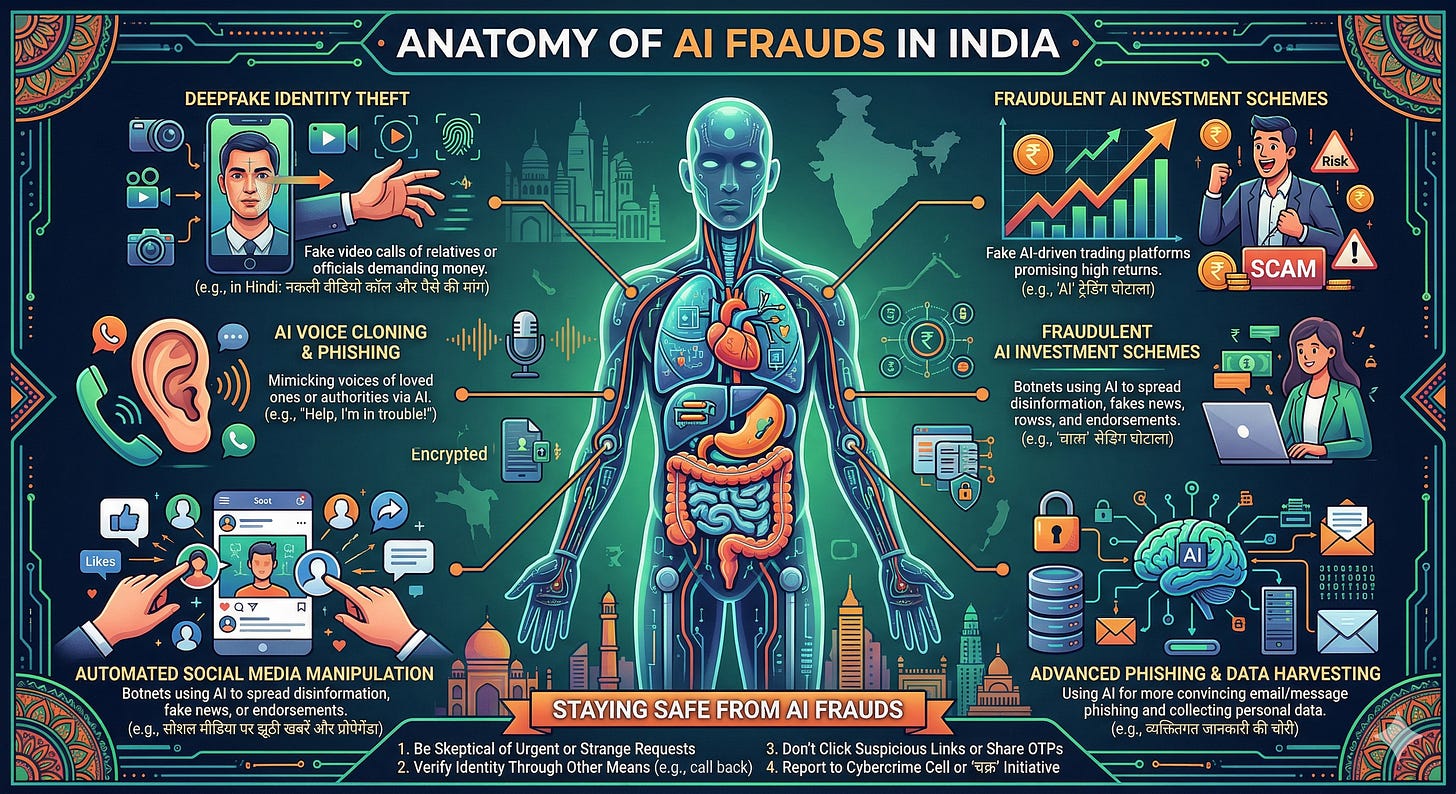

The Anatomy of AI Frauds in India

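Cybercrime is no longer just about poorly worded emails asking for passwords. AI has democratized deception, allowing fraudsters to operate with unprecedented scale, speed, and realism. According to the Reserve Bank of India, the amount involved in reported bank frauds nearly tripled to over ₹36,000 crore in FY25. Much of that jump reflects older loan-related cases being reclassified and reported that year, but card and internet frauds, the category in which these next-generation scams surface, remained the most numerous.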

The most prevalent AI-enabled frauds in India today include:

Voice Cloning and Familial Scams: Using just a few seconds of audio pulled from social media, scammers train AI models to clone a person’s voice. Victims receive panicked calls from what sounds exactly like their child, spouse, or parent claiming they have been arrested or are in a medical emergency, demanding immediate funds.

Deepfake “Digital Arrests”: A highly distressing trend in which fraudsters use deepfake video technology to impersonate law enforcement officers (like Police Commissioners or CBI officials) or customs agents on video calls. They fabricate legal notices and coerce victims into transferring money to avoid a fake “digital arrest,” a procedure that does not exist under Indian law.

Corporate “CEO Fraud”: Criminals use synthetic audio or video to impersonate top executives within a company, authorizing employees to urgently transfer funds to offshore accounts.

Automated Phishing & Identity Theft: Automated tools scrape large amounts of personal data from the web. This data is then fed into Large Language Models (LLMs) to craft highly targeted, error-free phishing messages that bypass traditional spam filters and manipulate human psychology.

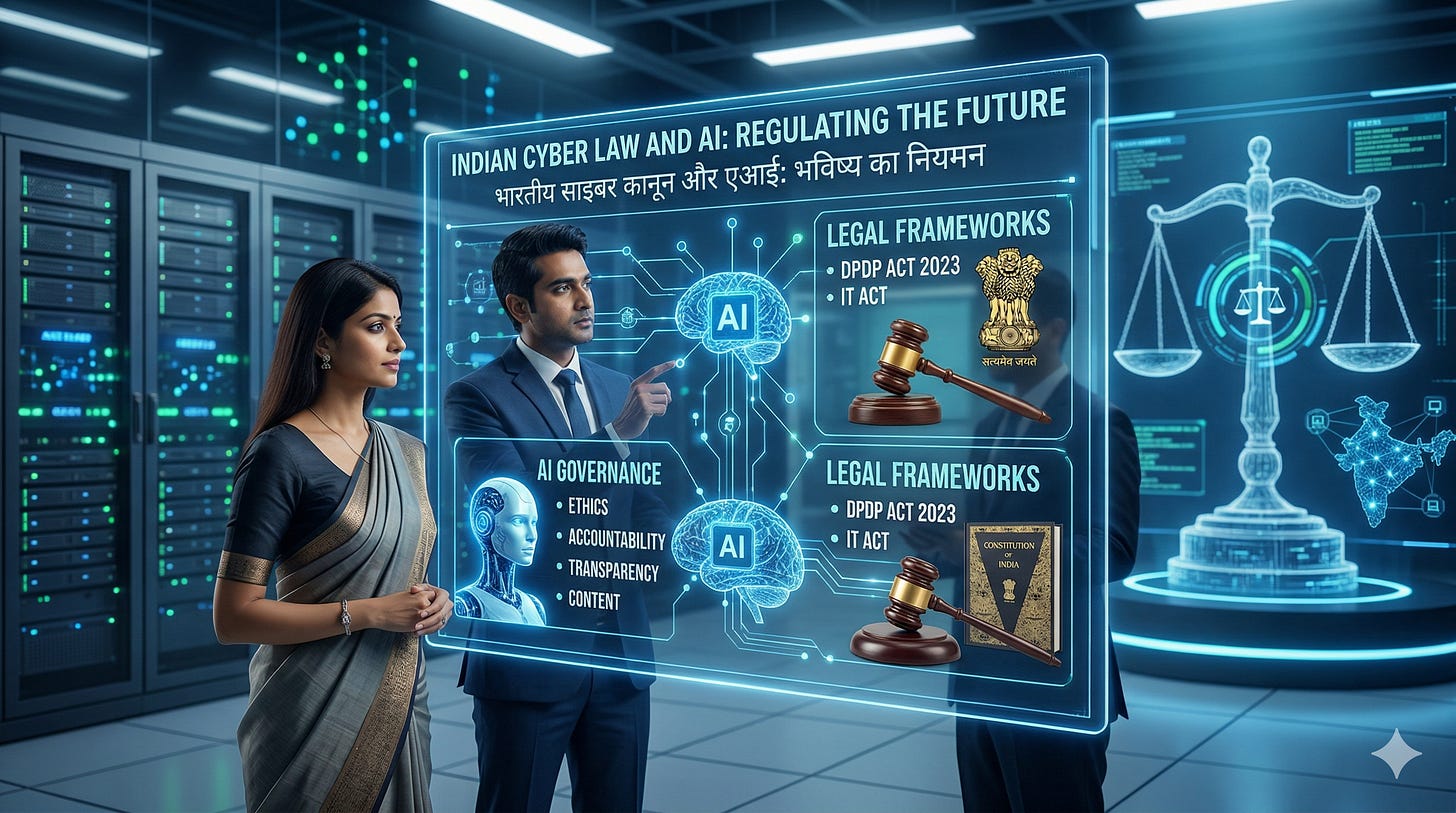

The Shield: Indian Cyber Law and AI

Indian cyber law is currently undergoing a massive structural shift to address these modern threats. While there is no single “AI Law,” a combination of foundational IT laws and newly implemented criminal codes forms the legal safety net.

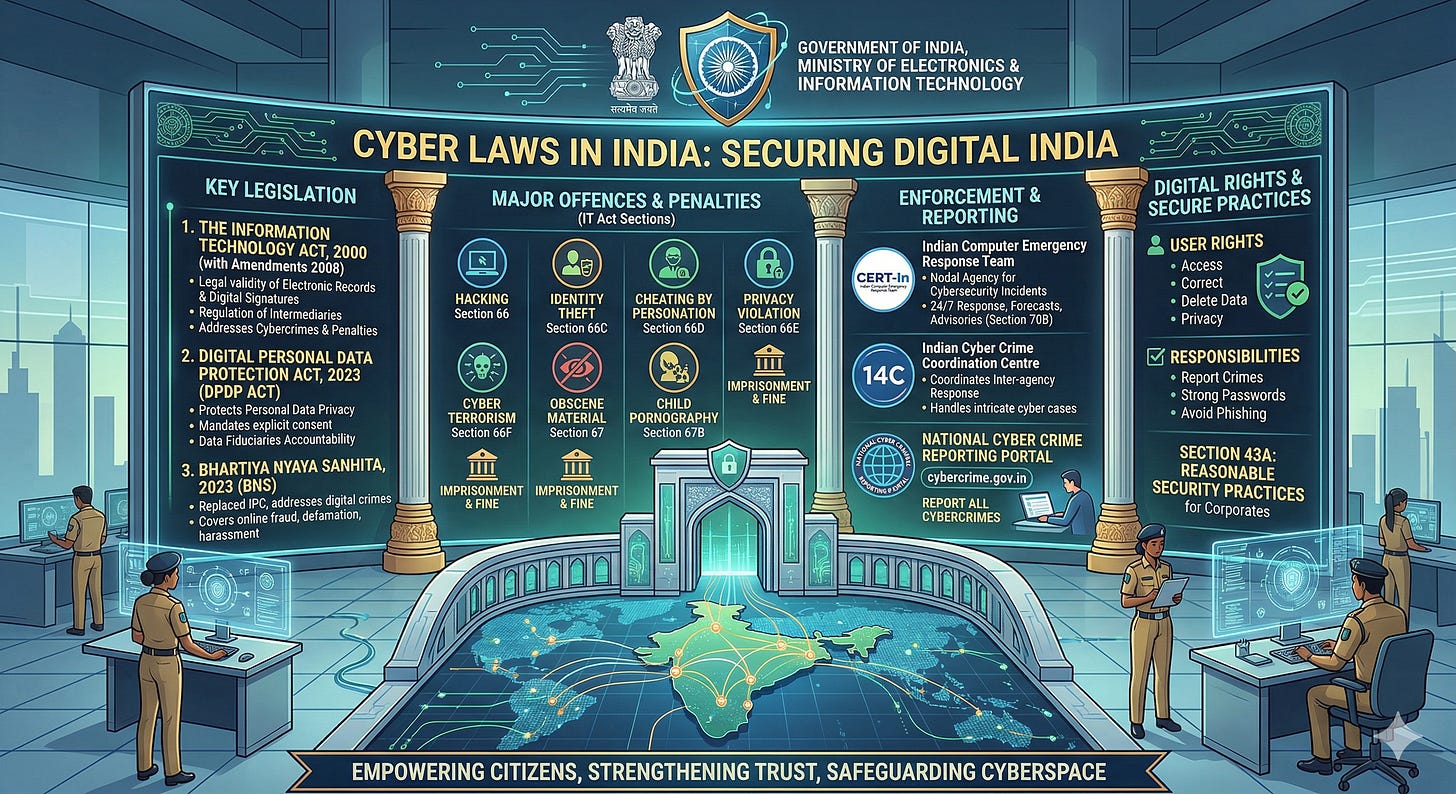

1. The Information Technology (IT) Act, 2000 & IT Rules

The IT Act remains the bedrock of cyber jurisprudence in India.

Section 66C (Identity Theft) & Section 66D (Cheating by Personation): These are the primary sections invoked when scammers use AI to impersonate someone else.

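Section 66E (Violation of Privacy): Penalizes capturing, publishing, or transmitting images of a person’s private area without consent. In cases of non-consensual deepfake pornography it is often invoked alongside Sections 67 and 67A, which cover obscene and sexually explicit material.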

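The IT Rules, 2021 (as amended in 2022 and 2023): These rules place due-diligence obligations on intermediaries such as social media platforms. Under Rule 3(2)(b), a platform must remove or disable access to content that shows a person in nudity or sexual acts, or impersonates them electronically (including through artificially morphed images such as deepfakes), within 24 hours of a complaint by that person.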

2. Bharatiya Nyaya Sanhita (BNS), 2023

Replacing the colonial-era Indian Penal Code (IPC), the BNS modernizes the approach to digital crimes:

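Section 318: Deals with cheating, including dishonestly inducing the delivery of property (the successor to IPC Section 420), and is directly applicable to financial cyber frauds. Section 319 separately covers cheating by personation.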

Section 111: Specifically targets organized crime syndicates, including those operating large-scale cyber fraud rings.

Section 353: Aims to curb the spread of misinformation by penalizing false or misleading statements that cause public mischief—a crucial tool against malicious deepfake campaigns.

3. Digital Personal Data Protection (DPDP) Act, 2023

Convincing voice clones and targeted scams run on personal data. The DPDP Act acts as a preventive shield by cutting off this “fuel” for fraud.

It mandates data minimization: Data Fiduciaries (the entities that decide how personal data is processed) may process only the personal data necessary for a specified, lawful purpose.

It requires reasonable security safeguards and free, specific, informed, and unambiguous consent, with verifiable parental consent for children’s data.

With penalties of up to ₹250 crore for failing to take reasonable security safeguards to prevent a personal data breach, the Act pushes organizations to protect the personal data that cybercriminals would otherwise exploit to train deceptive AI models.

4. Bharatiya Sakshya Adhiniyam (BSA), 2023

Replacing the Indian Evidence Act, 1872, the BSA updates the rules on the admissibility of electronic and digital records; Section 63, for instance, requires electronic records to be accompanied by a certificate, part of which is signed by an expert. This matters in the courtroom, where forensic validation is needed to establish whether a piece of audio or video evidence is genuine or an AI-generated fake.

The Gray Areas: Where the Law Struggles

While the legal framework is robust, it is not without its challenges. The law, by nature, moves slower than technology.

Attribution of Liability: If an open-source AI tool is used to generate a fraudulent voice clone, who is liable? The developer of the tool, the user, or the platform hosting it? Indian law still struggles with defining shared liability in autonomous AI systems.

Lack of Explicit “AI” Definitions: Current statutes are technology-neutral. While this prevents the law from becoming instantly outdated, the lack of specific definitions for “algorithmic liability” or “autonomous agents” can lead to inconsistent enforcement.

The Deepfake Forensic Gap: Collecting evidence that satisfies the courts under the BSA requires advanced digital forensics. Local law enforcement agencies often face resource constraints when trying to scientifically prove AI manipulation.

Conclusion

The fight against AI fraud is essentially “AI versus AI.” Cybersecurity agencies and platforms are increasingly deploying machine learning to detect deepfakes and block fraudulent transactions in real time. The Indian Cyber Crime Coordination Centre (I4C) and the national 1930 cyber helpline are critical, citizen-facing mechanisms designed to freeze stolen funds rapidly.

Technology will continue to blur the lines of reality, but awareness is your best immediate defence. Verifying urgent financial requests through an alternate channel—no matter how authentic the voice on the other end sounds—is the new digital standard.
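The alternate-channel rule can be written down as a simple decision procedure. The Python sketch below is purely illustrative: `MoneyRequest`, `next_step`, and the phone numbers are hypothetical, and it models a personal or office checklist, not any bank’s or I4C’s system. Its one assumption is that a contact saved before the request arrived is the only trustworthy alternate channel.

```python
from dataclasses import dataclass


@dataclass
class MoneyRequest:
    """Hypothetical model of an incoming call or message asking for funds."""
    claimed_sender: str   # who the caller says they are, e.g. "son"
    inbound_number: str   # number the request arrived from (can be spoofed)
    urgent: bool          # pressure cues: "pay now", "don't tell anyone"


def next_step(req: MoneyRequest, saved_contacts: dict[str, str]) -> str:
    """Decide how to verify a money request before paying.

    Rule sketched here: never verify on the channel the request came in on.
    Only a contact saved *before* the request counts as an alternate channel.
    """
    saved = saved_contacts.get(req.claimed_sender)
    if saved is None:
        # No independent way to reach the claimed sender, so do not pay.
        return "refuse"
    if req.inbound_number == saved and not req.urgent:
        # A matching caller ID is not proof (numbers can be spoofed),
        # but a calm request from a known number can wait for a callback.
        return f"call back on {saved} before paying"
    # Urgency or an unfamiliar number: hang up first, then call back yourself.
    return f"hang up, then call back on {saved} before paying"


# Usage: a panicked "son" calling from an unknown number.
contacts = {"son": "+91-90000-00001"}  # saved long before any emergency call
call = MoneyRequest("son", "+91-70000-99999", urgent=True)
print(next_step(call, contacts))
# hang up, then call back on +91-90000-00001 before paying
```

Every path ends in either a refusal or a callback on the saved number, which mirrors the point above: how authentic the voice sounds never enters the decision.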

AI represents a double-edged sword in India’s digital transformation. While it drives innovation and efficiency, it simultaneously empowers cybercriminals with unprecedented tools. India’s legal framework—anchored in the IT Act and evolving through recent amendments—has begun adapting to these challenges.

However, the future demands a proactive, technology-neutral, and globally harmonized legal regime, combined with robust enforcement and public awareness. Only then can India effectively mitigate AI-driven cyber fraud while sustaining its digital growth trajectory.